In my previous article, I showed how to use Power Automate to extract data using the Data management package API. In this article, we will see how to use Logic Apps to export a data package using recurring integrations.

You need the following to extract data from F&O using Logic Apps and the Data package API:

- A data project and a recurring data job configured in D365 Finance and Operations.

- An app registration to get the client ID and secret.

- An Azure subscription to create logic apps.
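The client ID and secret from the app registration are typically used in an OAuth 2.0 client-credentials flow to obtain a token for F&O. Here is a minimal sketch of how that token request could be assembled. The function name, the tenant ID parameter, and the use of `<base URL>/.default` as the scope are my assumptions for illustration; check them against your own app registration.

```python
from urllib.parse import urlencode


def build_token_request(tenant_id, client_id, client_secret, base_url):
    """Return (token_url, form_body) for a client-credentials token request.

    Hypothetical helper: it only builds the request and sends nothing.
    """
    token_url = f"https://login.microsoftonline.com/{tenant_id}/oauth2/v2.0/token"
    form = {
        "grant_type": "client_credentials",
        "client_id": client_id,
        "client_secret": client_secret,
        # Assumption: the F&O base URL acts as the resource for the token.
        "scope": f"{base_url.rstrip('/')}/.default",
    }
    return token_url, urlencode(form)
```

The returned body is meant to be sent as `application/x-www-form-urlencoded` in a POST to the returned URL. In a logic app, the HTTP action's built-in OAuth authentication can take care of this for you.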

The link below explains how to create a data project and configure recurring data jobs.

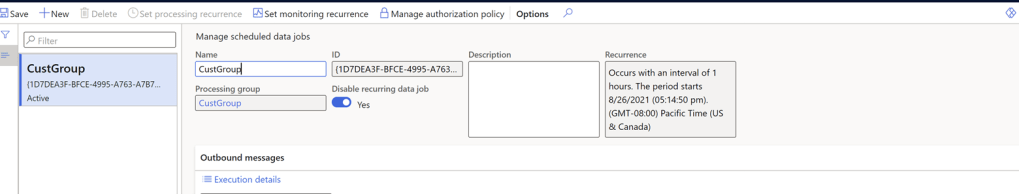

I have created and configured the following recurring data job in my environment. This data job is created for the CustGroup table and exports all the customer groups. The same can be configured for any data entity that is enabled for data management.

Once you configure your data project, you can build the dequeue API URL for the export using the activity ID.

https://<base URL>/api/connector/dequeue/<activity ID>
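As a small illustration, the URL pattern above can be assembled like this. The function name is hypothetical, and accepting either a bare host name or a full URL is just a convenience I added.

```python
def dequeue_url(base_url, activity_id):
    """Build the dequeue endpoint for a recurring data job."""
    # Assumption for convenience: accept a bare host name or a full URL.
    if not base_url.startswith(("http://", "https://")):
        base_url = "https://" + base_url
    # Avoid a double slash when the base URL ends with "/".
    return f"{base_url.rstrip('/')}/api/connector/dequeue/{activity_id}"
```

The activity ID is the one shown on the recurring data job you configured in the data project.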

The next step is to create a logic app that downloads the package created by the configured recurring data job.

You can learn about creating logic apps from the following documentation.

https://docs.microsoft.com/en-us/azure/logic-apps/quickstart-create-first-logic-app-workflow

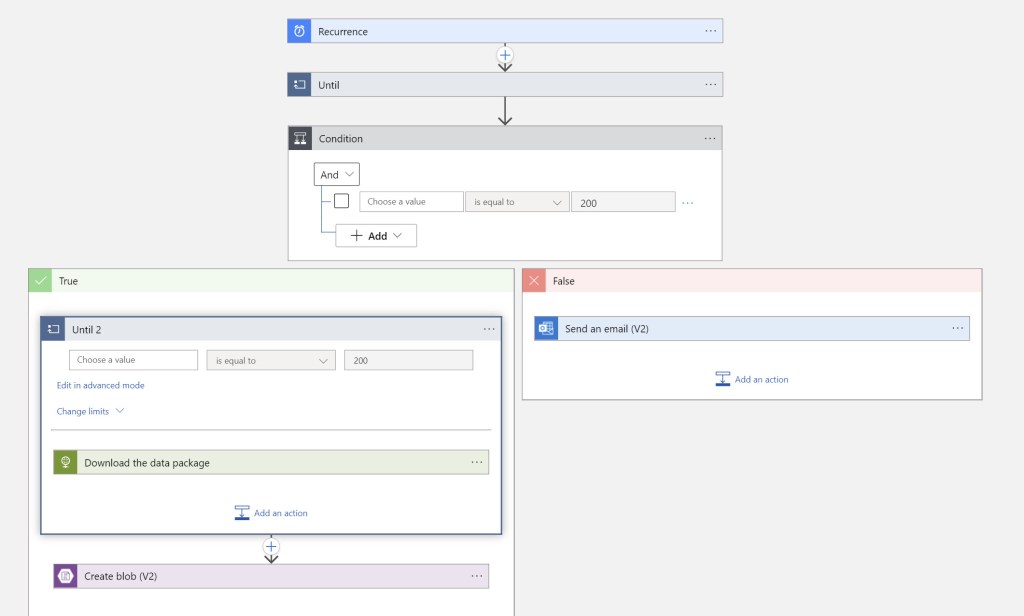

High-level steps for the integration:

1. Create the recurring data job and set its recurrence.

2. Create a logic app using the Azure portal.

3. Set a manual trigger for the logic app.

4. Call the recurring data job's dequeue endpoint using an HTTP action to get the location of the package we want to download.

5. Once we have the download location, download the package using another HTTP call.

6. Once that call succeeds, write the data package to the blob location.
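To make the flow of the last three steps concrete, here is a rough Python sketch of the same sequence outside Logic Apps. It is not how the logic app is implemented. `http_get` and `upload_blob` are hypothetical callables you would supply (for example, an authenticated HTTP client and a blob storage client), and the empty-response check is a defensive assumption on my part.

```python
import json


def export_package(http_get, upload_blob, dequeue_endpoint, blob_name):
    """Dequeue, download, and store one data package.

    http_get(url) -> bytes and upload_blob(name, data) are hypothetical
    callables provided by the caller. Returns the package size in bytes,
    or None when there is nothing to download.
    """
    # Step 4: call the dequeue endpoint to find out where the package is.
    raw = http_get(dequeue_endpoint)
    if not raw:
        return None  # assumption: an empty body means nothing is queued
    location = json.loads(raw).get("DownloadLocation")
    if not location:
        return None

    # Step 5: download the package from the returned location.
    package = http_get(location)

    # Step 6: write the package to blob storage.
    upload_blob(blob_name, package)
    return len(package)
```

Passing the two callables in keeps the sketch independent of any particular HTTP or storage library, so the sequence of calls is easy to follow and to try out with stand-ins.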

Let's take a look at steps 4, 5, and 6 in detail.

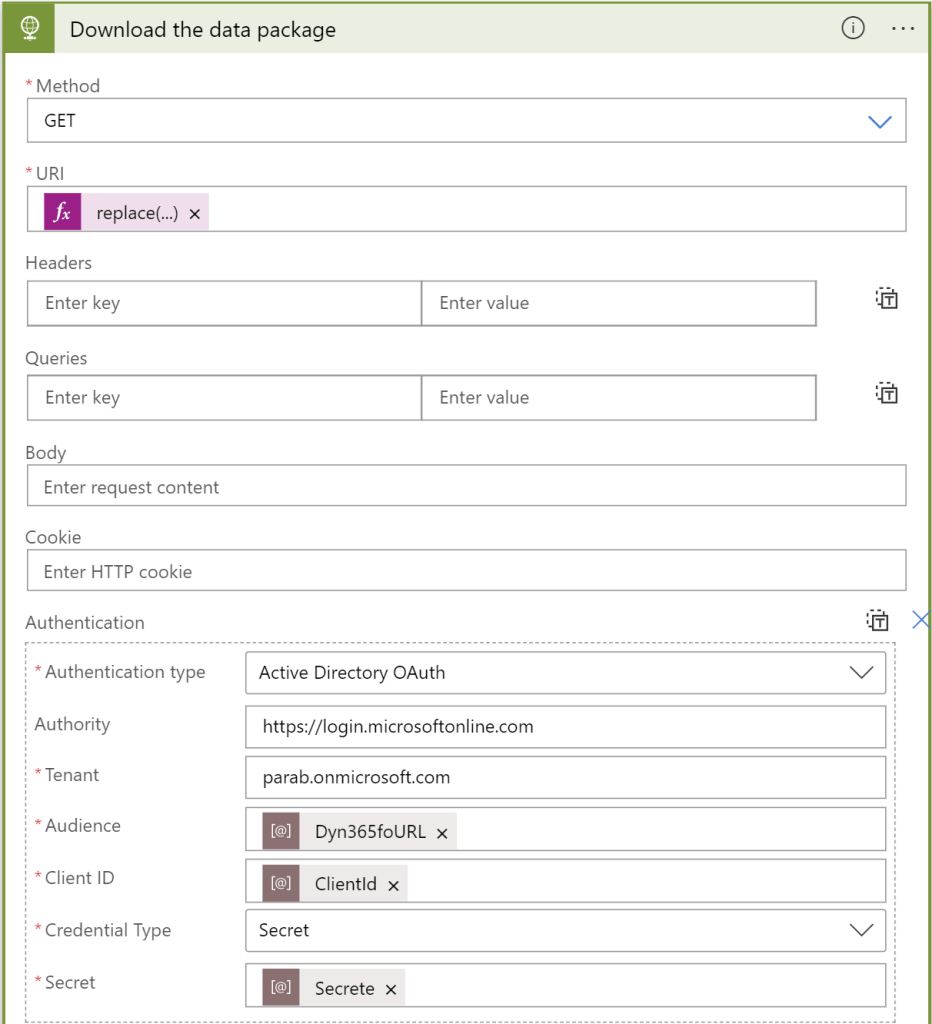

In step 4, we get the download location by making a GET call to the dequeue endpoint.

The output of this step looks like this:

    {
      "CorrelationId": "d093632e-878c-4201-86e8-e87c849bbb85",
      "PopReceipt": "AgAAAAMAAAAAAAAA7vannRSl1wE=",
      "IsDownLoadFileExist": true,
      "FileDownLoadErrorMessage": null,
      "LastDequeueDateTime": null
    }
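Before downloading, it can be useful to inspect this response. The sketch below reads the fields shown above. The function name is hypothetical, and treating a non-empty `FileDownLoadErrorMessage` as a failure is my assumption about how you would want to handle it.

```python
import json


def package_ready(dequeue_body):
    """Return True when the dequeue response reports a downloadable file."""
    message = json.loads(dequeue_body)
    error = message.get("FileDownLoadErrorMessage")
    if error:
        # Assumption: any error message means the package should not be used.
        raise RuntimeError(error)
    return bool(message.get("IsDownLoadFileExist"))
```

In a logic app, the same check could be done with a Condition action on the parsed response.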

Using the download location from the above step, we download the file with another HTTP action in step 5. You can use the Parse JSON data operation to get the URL, or use the expression below directly:

    replace(replace(body('HTTP')['DownloadLocation'],'http:','https:'),':80',':443')
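The intent of this expression is to rewrite an `http` URL on port 80 into an `https` URL on port 443. For readers more comfortable with code, the same two replacements look like this in Python. The function name is hypothetical, and like the expression, this is a plain string replacement rather than real URL parsing.

```python
def to_https(download_location):
    """Switch the scheme to https and the port from 80 to 443."""
    # Naive string replacement, mirroring the Logic Apps expression;
    # it would also touch other occurrences of ":80" in the URL.
    return download_location.replace("http:", "https:").replace(":80", ":443")
```

Note that the replacement text for the scheme needs the trailing colon (`https:`), otherwise the colon after the scheme is lost.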

The last step is to move the data package downloaded by the above action to a blob container. I have a connection to my blob storage, where I specified my container name d365files, provided a name for the data package, and set the blob content to the body received from the HTTP action.
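In the logic app, the blob connector handles this upload. If you wanted to do the same thing outside Logic Apps, one option is the Blob storage REST API's Put Blob operation. The sketch below only assembles such a request and sends nothing. The function name, the storage account name, and the SAS token are all assumptions for illustration, and this is a different technique from the connector used in this article.

```python
def put_blob_request(account, container, blob_name, sas_token, package):
    """Describe a Put Blob request for a downloaded data package.

    Hypothetical helper: returns the pieces of the request as a dict so
    they can be passed to any HTTP client.
    """
    url = (
        f"https://{account}.blob.core.windows.net/"
        f"{container}/{blob_name}?{sas_token.lstrip('?')}"
    )
    headers = {
        # Required by Put Blob to create a block blob.
        "x-ms-blob-type": "BlockBlob",
        # Assumption: the data package is stored as a zip file.
        "Content-Type": "application/zip",
        "Content-Length": str(len(package)),
    }
    return {"method": "PUT", "url": url, "headers": headers, "body": package}
```

For example, `put_blob_request("mystorage", "d365files", "custgroups.zip", sas, data)` describes an upload into the d365files container, where `mystorage`, `sas`, and `data` are placeholders.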

Once we run this, the data package extracted by the recurring data job is moved to blob storage, and the status of the recurring data job in F&O changes to Downloaded.

That's all for now. In the next article, we will see how to import data using Power Automate and the import package API.