Dynamics 365 Finance and Operations apps offer multiple integration patterns, such as Power Platform integration, custom services, OData, and the batch data API.

Integration through the batch data API can be performed in two different ways: the Data management package REST API and the Recurring integrations API.

The batch data API is an asynchronous integration pattern: the request to the API is itself synchronous and returns the execution ID of the job created in the system. The data project job created by the call then runs in batch mode, and the data is imported or exported.

In this blog we will see how to use Power Automate and the Data management package REST API to export a data package to Azure Blob Storage.

For demonstration purposes, we will use the data entity CustCustomerReasonEntity, which was created for importing and exporting reason code data.

This entity is marked as data management enabled and public so that it can be called from any middleware.

The first thing you need in order to call an entity through the data package API, for export or import, is a data project. Open the Data management workspace and create a data project as shown in the image below. I have selected CSV as the data format for the export.

Now it’s time to configure our Power Automate flow, which will use the Data management package REST API to extract the data package. The image below shows all the configured steps. We will go through each step in detail to understand what it does.

The first step triggers the flow manually. In the second step I call the ExportToPackage API. As you can see below, I have specified the F&O instance URL and the definition group (data project) CustomerReasonCodes.

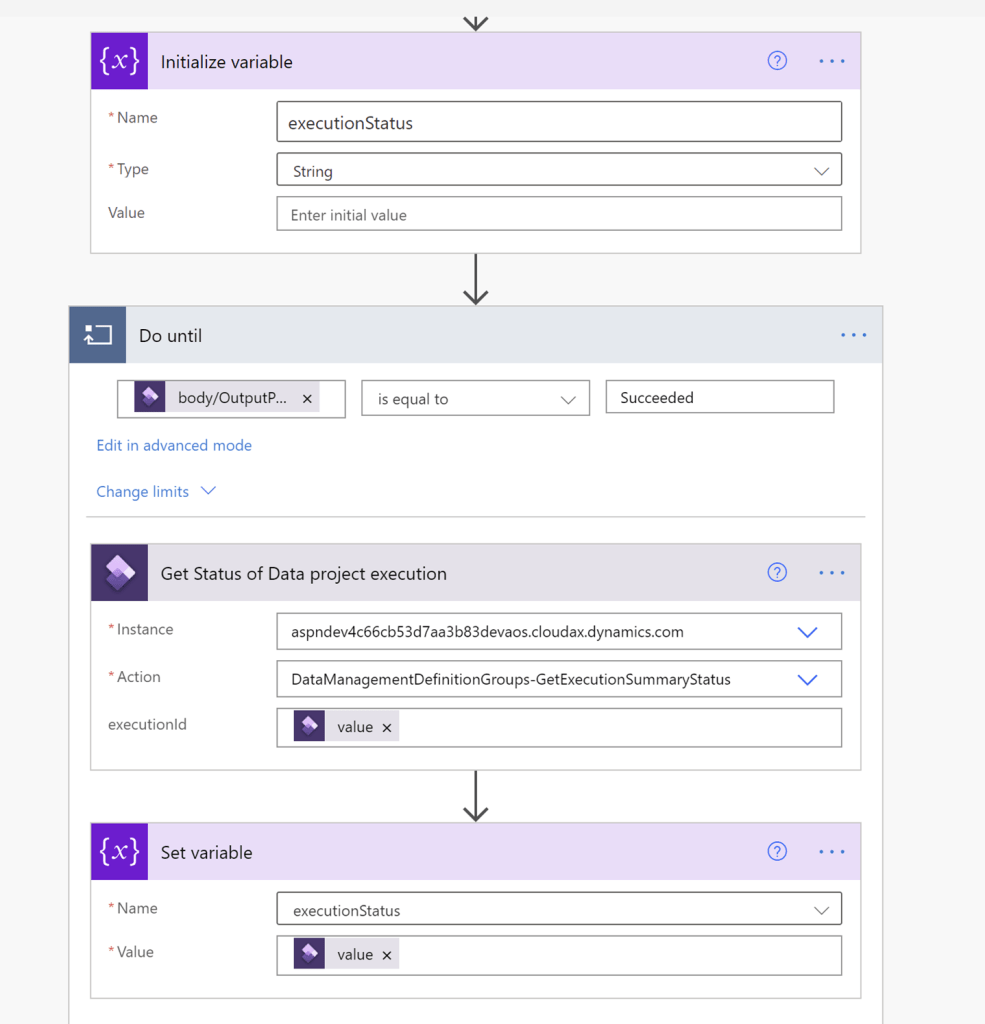
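For readers who want to see the same call outside Power Automate, here is a minimal Python sketch of the ExportToPackage request. It only builds the request and does not send it. The bearer token, the package file name, and the legal entity are assumptions that do not appear in the flow above; the helper names `build_export_request` and `parse_execution_id` are hypothetical.

```python
import json
import urllib.request

# OData action path of the ExportToPackage API, relative to the F&O instance URL.
EXPORT_ACTION = (
    "/data/DataManagementDefinitionGroups/"
    "Microsoft.Dynamics.DataEntities.ExportToPackage"
)


def build_export_request(base_url, token, definition_group_id, legal_entity_id,
                         package_name="CustomerReasonCodes.zip"):
    """Build (but do not send) the POST request for ExportToPackage."""
    body = {
        "definitionGroupId": definition_group_id,  # the data project name
        "packageName": package_name,               # assumed file name
        "executionId": "",                         # empty: the system generates one
        "reExecute": False,
        "legalEntityId": legal_entity_id,          # assumed company, e.g. "USMF"
    }
    return urllib.request.Request(
        url=base_url.rstrip("/") + EXPORT_ACTION,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": "Bearer " + token,    # assumed OAuth bearer token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_execution_id(response_text):
    """The response is an OData payload whose "value" is the execution ID."""
    return json.loads(response_text)["value"]
```

Passing the request to `urllib.request.urlopen` would send it; the execution ID parsed from the response is what the later steps need.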

The following three steps check the status of the data project execution triggered in the step above. The data package will not be present in blob storage until the execution has succeeded, so the call to the execution status API (GetExecutionSummaryStatus) is made in a loop until the API returns Succeeded.

Remember to pass the execution ID returned by the ExportToPackage API as a parameter in this step.
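The polling loop can be sketched in Python as follows. This is an illustration, not the flow's exact logic: the function name `wait_for_export`, the 30-second interval, and the cap on attempts are assumptions, and `get_status` stands for whatever callable performs the status API call and returns the status string. Unlike the loop described above, the sketch also stops on the failure statuses so that it does not spin forever on a failed job.

```python
import time

# Statuses after which the execution will not change any more.
TERMINAL_STATUSES = {"Succeeded", "PartiallySucceeded", "Failed", "Canceled"}


def wait_for_export(get_status, execution_id, poll_seconds=30, max_polls=40,
                    sleep=time.sleep):
    """Poll until the execution reaches a terminal status, then return it.

    get_status is any callable that takes the execution ID and returns the
    status string reported by the API.
    """
    for _ in range(max_polls):
        status = get_status(execution_id)
        if status in TERMINAL_STATUSES:
            return status
        sleep(poll_seconds)  # wait before asking again
    raise TimeoutError(
        f"Execution {execution_id} did not finish after {max_polls} polls"
    )
```

The caller should continue to the download steps only when the returned status is `Succeeded`.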

Once the data project execution has succeeded, we use the same execution ID to retrieve the blob URL, which the next step uses to download the package. In this step I call the GetExportedPackageUrl API to get the blob URL.
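As a rough Python equivalent of this step, the sketch below builds the GetExportedPackageUrl request and reads the URL from the response. The bearer token is an assumption, and the helper names `build_package_url_request` and `parse_package_url` are hypothetical.

```python
import json
import urllib.request

# OData action path of the GetExportedPackageUrl API.
PACKAGE_URL_ACTION = (
    "/data/DataManagementDefinitionGroups/"
    "Microsoft.Dynamics.DataEntities.GetExportedPackageUrl"
)


def build_package_url_request(base_url, token, execution_id):
    """Build (but do not send) the POST request for GetExportedPackageUrl."""
    body = {"executionId": execution_id}  # ID returned by ExportToPackage
    return urllib.request.Request(
        url=base_url.rstrip("/") + PACKAGE_URL_ACTION,
        data=json.dumps(body).encode("utf-8"),
        headers={
            "Authorization": "Bearer " + token,  # assumed OAuth bearer token
            "Content-Type": "application/json",
        },
        method="POST",
    )


def parse_package_url(response_text):
    """The blob URL comes back in the "value" property of the response."""
    return json.loads(response_text)["value"]
```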

The following step downloads the package from the blob URL retrieved in the step above. To do that, simply use the HTTP action to make a GET call to the value (URL) returned by the previous step.

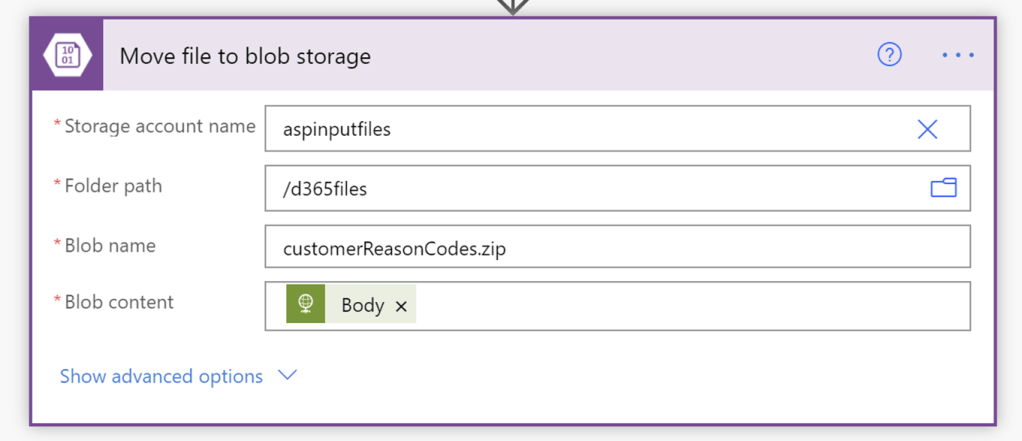

In the next step, I move the downloaded package to the blob location. Search for the Azure Blob Storage connector and select the Create blob (V2) action, then give the file a name and pass the body received in the step above as the blob content.
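Outside Power Automate, the download and the write to blob storage could look roughly like the Python sketch below. It swaps the Create blob (V2) connector action for a direct call to the Blob service's Put Blob REST operation, and it assumes the target blob is addressed by a URL that already carries a shared access signature. Both function names are hypothetical.

```python
import urllib.request


def download_package(package_url):
    """GET the exported zip from the URL returned by GetExportedPackageUrl."""
    with urllib.request.urlopen(package_url) as response:
        return response.read()


def build_blob_upload_request(blob_sas_url, content):
    """Build (but do not send) a Put Blob request that writes a block blob.

    blob_sas_url is an assumed SAS URL pointing at the target blob.
    """
    return urllib.request.Request(
        url=blob_sas_url,
        data=content,
        headers={
            "x-ms-blob-type": "BlockBlob",      # required by Put Blob
            "Content-Type": "application/zip",
        },
        method="PUT",
    )
```

Calling `urllib.request.urlopen(build_blob_upload_request(sas_url, download_package(url)))` would chain the two steps, much as the flow passes the body of the HTTP action to the create blob action.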

As you can see in the screen below, once the flow ran successfully, the package was exported to blob storage.

The screen below shows the content of the extracted zip folder.

The screenshot below shows the content of the customer reasons file.

That’s all for now. In the next article we will see how to use Logic Apps and the Recurring integrations API to export data from D365 Finance and Operations.